Eddie Zhang

I study reinforcement learning for social good.

About

I am currently working at a new lab. I was previously at OpenAI, working on safety, alignment, and applying AI for social good. I dropped out of a CS PhD at Harvard. Other areas of interest include political philosophy, macroeconomics, and the principles of intelligence.

I am grateful to have worked closely with Tyna Eloundou at OpenAI, Professor Milind Tambe at Harvard, Professor Chuang Gan at MIT-IBM Watson, Professor Amy Zhang at Meta, and Professor William Wang at UCSB.

Selected Research

Why language models hallucinate

- Popularized the idea of abstention during post-training of large language models.

- Helped argue that modern LLM evals should account for expressions of uncertainty, to incentivize mitigating hallucinations.

Collective alignment: public input on our Model Spec

- Surveyed >19 countries to gather feedback on OpenAI's Model Specification, a document outlining the ideal behavior of OpenAI's models.

- Integrated changes gathered from this collective feedback into the specification.

Transcendence: Generative Models Can Outperform The Experts That Train Them

- Demonstrated theoretically and empirically that generative models can outperform the experts that train them through low-temperature sampling

- Ran experiments in a toy Gaussian setting, chess, and NLP (SQuAD v2)

Towards Generalist Agents Through Scaling Offline Reinforcement Learning

- Introduced new perspectives on pursuing Artificial General Intelligence (AGI) under the modern data-driven regime

- Proposed a computability hypothesis regarding the potential and limits of applying RL to the real world

Invited Talks & Teaching

Teaching is one of my passions. I really love it.

- Stanford AI Safety Seminar, 4/3/25. Deliberative Alignment: On Alignment through Reasoning in LLMs. Slides, Video

- Harvard EconCS Incentives and Learning Seminar, 10/31/23. AI Economist: Review and Analysis. Slides, Video

- Harvard SEAS AI for Social Good Seminar, 10/26/23. Language Control Diffusion: Efficiently Scaling to Generalist Agents through Space, Time, and Tasks.

- Harvard Law School Tax Law Seminar, 10/25/23. Review and Analysis of the AI Economist: Improving Equality and Productivity with AI-Driven Tax Policies. (Review of work done by Stephan Zheng et al.)

- UCSB Master's Thesis Defense, 5/31/23. Towards Generalist Agents through Scaling Offline Reinforcement Learning. Slides, Video

- MIT Brain and Cognitive Sciences, 12/13/22. Integrating Language into Reinforcement Learning through Diffusion

- NeurIPS Integrated Grounded Language and Understanding Competition, 12/06/22. Hierarchical RL through Diffusion Models

- UCSB Computer Science Research Poster Session, 6/03/22. Offline RL with CFPI

- UCSB Natural Language Processing Lab, 4/08/22. Soft Actor-Critic: Off-Policy Maximum Entropy Deep Reinforcement Learning with a Stochastic Actor Review

- Allthenticate Lunch & Learn, 2/24/21. Introduction to neural networks

- UCSB CMPSC 16 (C++) Learning Assistant, Winter '20

- UCSB CMPSC 165B (ML) Teaching Assistant, Spring '23

Interests

I really like learning, and thinking about learning. I like spending time with people even more.

I also like to run and play tennis.

Miscellaneous

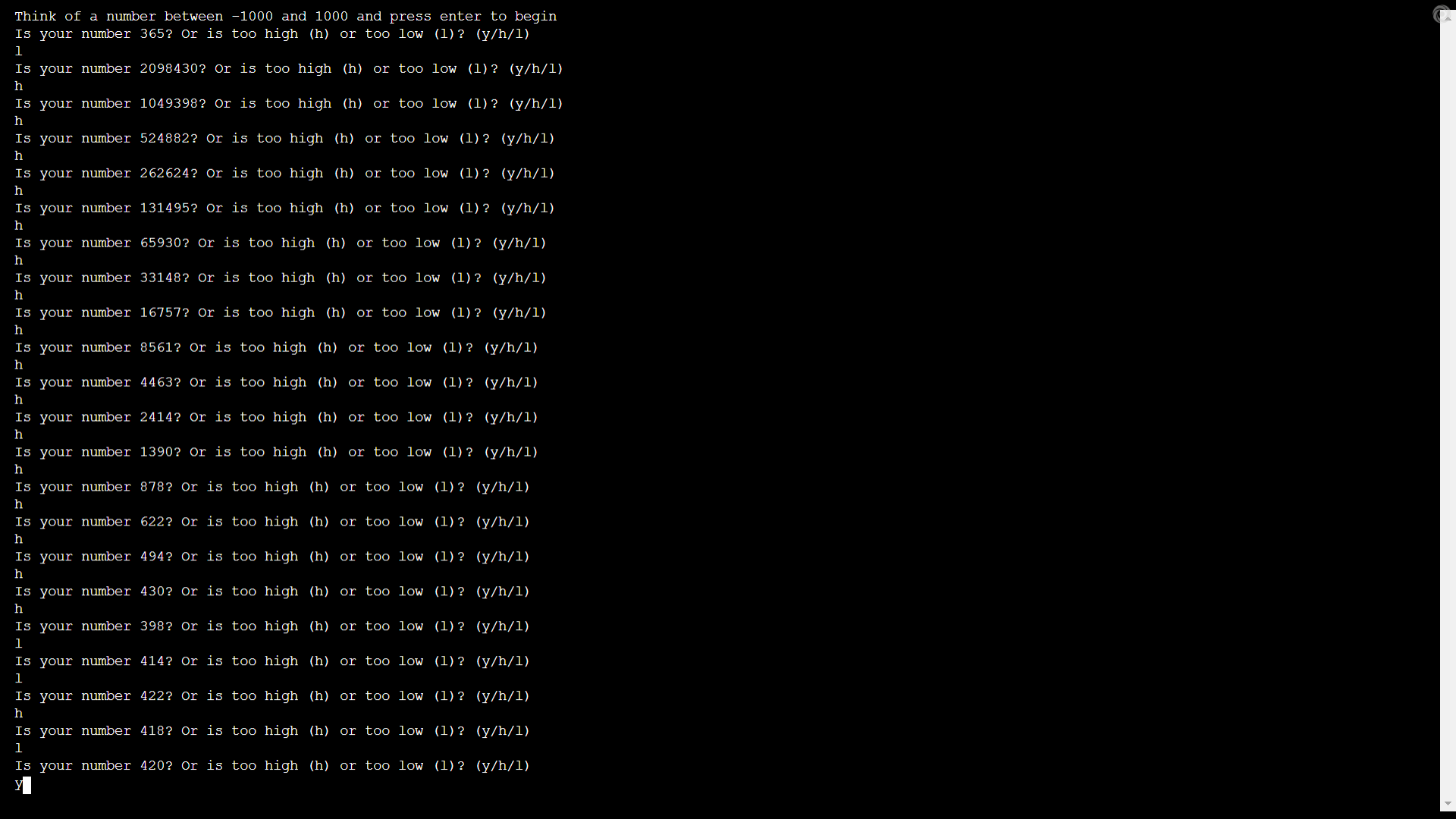

The credit assignment problem is an extremely interesting problem that appears in Reinforcement Learning and AI in general. Let's say that I play a game of chess, and make n moves in succession. At the end of the game, I get just one discrete feedback signal: the outcome of the game. How does one attribute the importance of each move to the outcome of the game? This is the credit assignment problem. For a more in-depth introduction to the topic I would recommend this paper from Minsky, starting from part 3 on page 10.
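As a minimal sketch of one simple heuristic (an illustrative assumption, not something taken from the Minsky paper), the single outcome can be spread back over the n moves with an exponential discount, so that moves closer to the end of the game receive more credit. The function name `discounted_credit` and the discount factor `gamma` are hypothetical choices made for this example.

```python
def discounted_credit(n_moves: int, outcome: float, gamma: float = 0.9) -> list[float]:
    """Spread one terminal outcome over n moves.

    Move t (0-indexed) receives outcome * gamma ** (n_moves - 1 - t),
    so the final move gets the full outcome and earlier moves get less.
    """
    return [outcome * gamma ** (n_moves - 1 - t) for t in range(n_moves)]


# A five-move game that ends in a win (outcome = 1.0).
credits = discounted_credit(n_moves=5, outcome=1.0)
for move, credit in enumerate(credits, start=1):
    print(f"move {move}: credit {credit:.4f}")
```

This assigns credit purely by recency. It says nothing about which moves actually mattered, which is exactly what makes the problem hard.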

I mention this here because very little of my career credit should be attributed to me. I am eternally grateful to the following people for their kindness, support, and guidance. Without them, I would have nothing. In order of recency (not importance): Michael Ovitz, CJ Reim, Sam Altman, Jiachen Li, Chad Spensky, Shou Chaofan, Derren Slinde.

- Sam Kwok (Stealth, 2025)

- Vincent Zhu (TikTok, 2024)

- Henry Gasztowtt (Stealth, 2024)

- Ben Smith (Stealth, 2023)

- Matthew Ho (UCSD PhD, 2024)

- Peiyang Song (Caltech BS, 2024)

- Lauren Cooke (Harvard BS, 2023)

- Shinda Huang (UCSB MS, 2023)

- Katelyn Zhang (Google SWE, 2022)

- Yuhao Zhang (Amazon SWE, 2021)

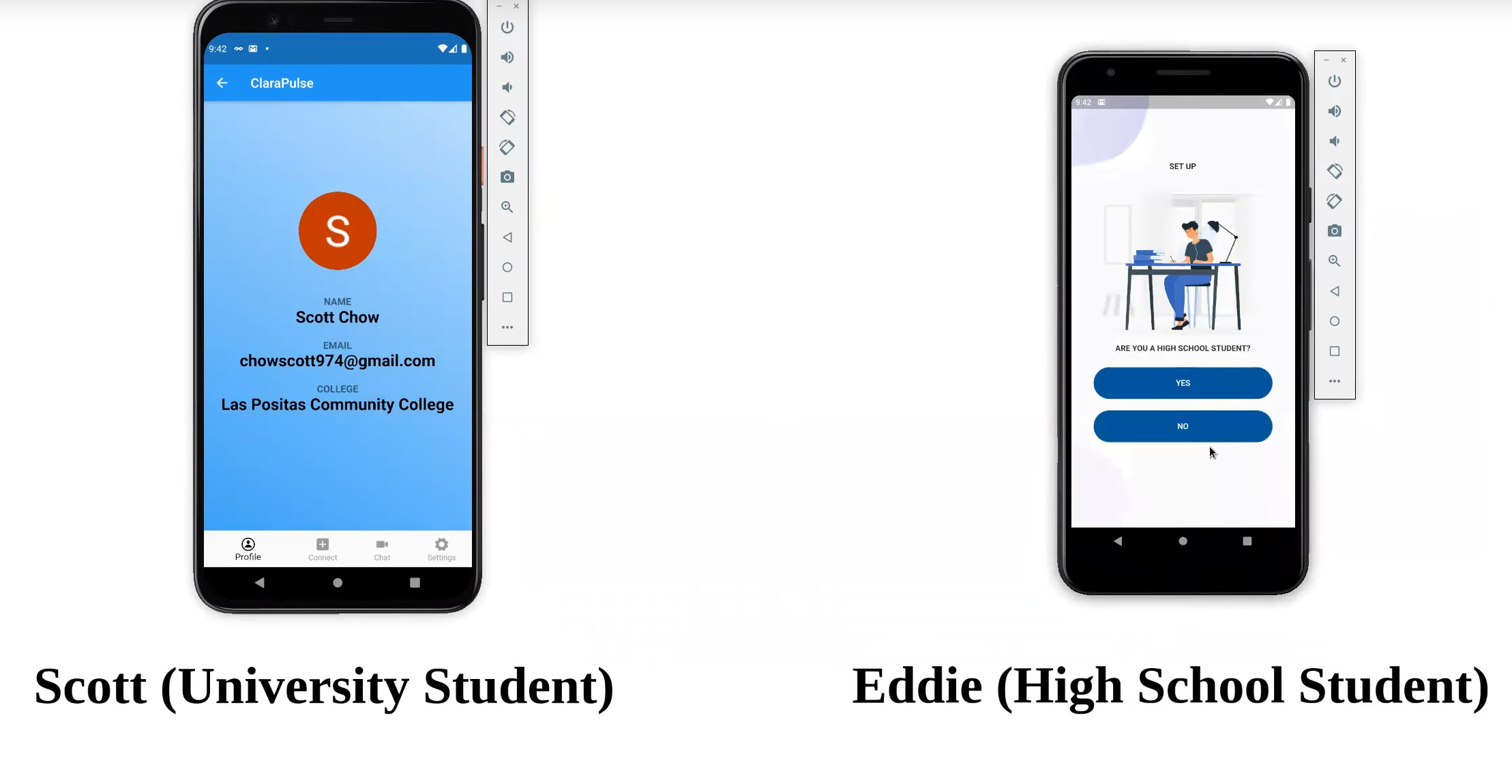

bastardization of my actual name edwin. my friends thought this was hilarious and so they started calling me that too: the domain name is just a massive joke.